Category | Quality Management

Last Updated On 07/04/2026

Artificial Intelligence is no longer judged by how intelligent it is; it’s judged by how responsibly it behaves.

In the last few years, AI systems have influenced credit approvals, medical diagnoses, insurance pricing, recruitment decisions, cybersecurity defenses, and even public policy. One flawed model can impact thousands, sometimes millions, of lives in seconds. That scale of impact has shifted the conversation from “Can we build it?” to “Should we deploy it this way?”

Organizations today face a new pressure: prove that their AI is fair, transparent, secure, and accountable. Regulators are watching. Customers are questioning. Boards are demanding governance. And investors are assessing ethical risk alongside financial performance.

This raises an important question: How does ISO 42001 define Responsible AI?

If you are an AI leader, compliance officer, IT manager, risk professional, or business executive exploring AI governance frameworks, this topic is directly relevant to you. As AI systems become more embedded in hiring, healthcare, finance, cybersecurity, and public services, organizations must ensure they are building trustworthy AI systems backed by structured responsible AI governance.

That’s where ISO/IEC 42001 steps in.

Let’s break it down in simple, practical terms.

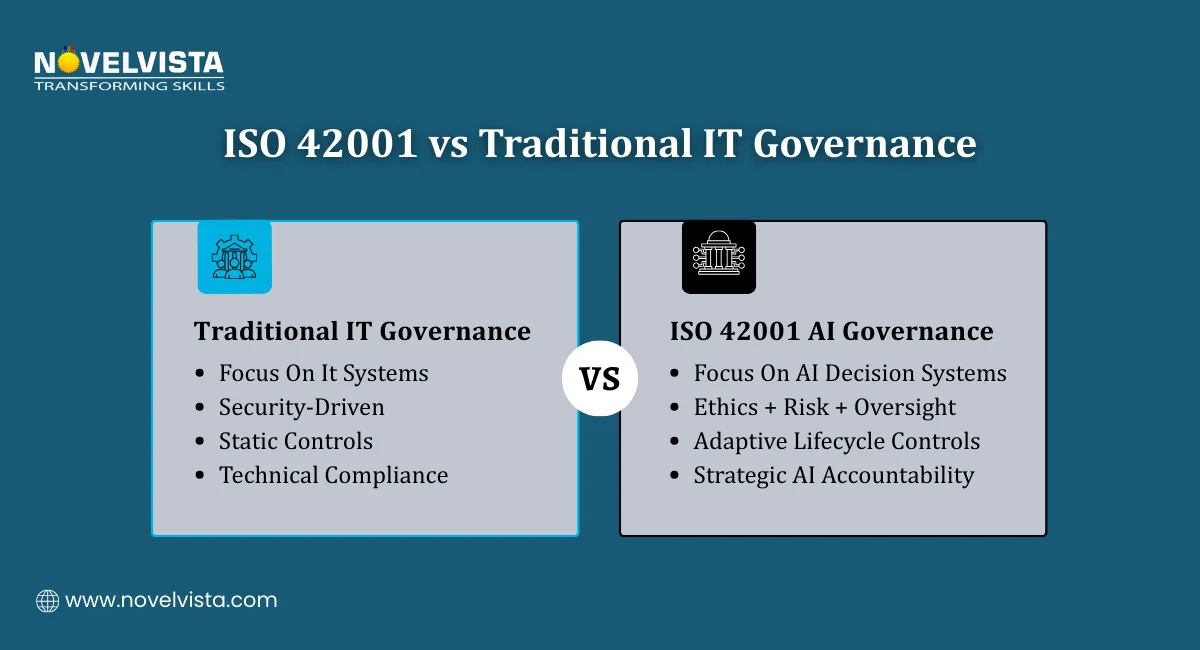

ISO/IEC 42001 is the world’s first international standard created specifically for Artificial Intelligence Management Systems (AIMS), providing a formal structure for governing AI at the organizational level. Similar to how ISO/IEC 27001 addresses information security and ISO 9001 focuses on quality management, ISO 42001 establishes a systematic framework to ensure AI is managed responsibly. Rather than concentrating only on technical design, it applies a management system approach built on policies, risk controls, documented processes, and leadership accountability. So when organizations ask how ISO 42001 defines responsible AI, the answer lies not in abstract ethics but in structured, operational governance.

At its core, ISO 42001 defines Responsible AI through a risk-based, lifecycle-driven, governance-focused framework. It ensures AI systems are:

Rather than offering philosophical definitions, ISO 42001 translates Responsible AI into measurable organizational controls.

In simple words, Responsible AI under ISO 42001 means AI systems that are developed, deployed, and managed with documented oversight, risk controls, human supervision, and continuous improvement mechanisms.

This is where responsible AI governance becomes central.

The standard requires organizations to:

So, when organizations ask how ISO 42001 defines responsible AI, the answer is that it defines it as a managed system, not just a moral aspiration.

Responsible AI governance starts at the top. ISO 42001 mandates leadership involvement in defining AI policies, objectives, and risk tolerance levels.

Executives cannot delegate responsibility entirely to technical teams. Governance requires board-level visibility and decision-making accountability.

A key element in understanding how ISO 42001 defines responsible AI lies in its risk assessment process.

Organizations must evaluate:

This structured AI risk assessment ensures trustworthy AI systems are not accidental; they are engineered through foresight.
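To make the idea of a structured risk assessment concrete, here is a minimal sketch of a risk register that scores each AI risk by likelihood and impact. The `AIRisk` class, the 1 to 5 scales, and the `high` and `medium` thresholds are assumptions chosen for illustration; they are not values taken from ISO 42001, and each organization would set its own criteria.

```python
from dataclasses import dataclass


@dataclass
class AIRisk:
    """One entry in a hypothetical AI risk register."""
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Simple likelihood x impact scoring, a common risk-matrix convention.
        return self.likelihood * self.impact


def risk_level(risk: AIRisk, high: int = 15, medium: int = 8) -> str:
    """Map a score to a level; the thresholds are illustrative assumptions."""
    if risk.score >= high:
        return "high"
    if risk.score >= medium:
        return "medium"
    return "low"


register = [
    AIRisk("Biased outcomes in candidate screening", likelihood=4, impact=5),
    AIRisk("Chatbot returns outdated product information", likelihood=3, impact=2),
]

# Review the highest-scoring risks first.
for risk in sorted(register, key=lambda r: r.score, reverse=True):
    print(f"{risk_level(risk):6} {risk.score:2}  {risk.description}")
```

A register like this gives leadership a documented, repeatable basis for deciding which AI risks need treatment first.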

Black-box AI models pose trust challenges. ISO 42001 requires appropriate levels of explainability based on context and impact.

This means:

Transparency strengthens public confidence and regulatory readiness.

Responsible AI governance demands human intervention mechanisms. AI should assist, not fully replace, critical decision-making where risks are high.

ISO 42001 encourages:

This ensures automation does not eliminate accountability.
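One way a human intervention mechanism might look in practice is a simple routing rule that sends high-risk or low-confidence decisions to a reviewer. This is a sketch under stated assumptions: the function name `needs_human_review`, the risk tier labels, and the 0.9 confidence threshold are all hypothetical and not prescribed by the standard.

```python
def needs_human_review(risk_tier: str, confidence: float,
                       threshold: float = 0.9) -> bool:
    """Decide whether an AI decision should go to a human reviewer.

    risk_tier:  assumed labels "low", "medium", or "high"
    confidence: the model's confidence in its own output, between 0 and 1
    threshold:  illustrative cut-off below which a human must confirm
    """
    if risk_tier == "high":
        # High-risk decisions always get a human in the loop.
        return True
    # Lower-risk decisions are automated only when the model is confident.
    return confidence < threshold


print(needs_human_review("high", 0.99))  # True
print(needs_human_review("low", 0.95))   # False
print(needs_human_review("low", 0.60))   # True
```

Logging each routing decision alongside the reviewer's outcome would also give auditors evidence that oversight actually took place.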

AI systems evolve. Data drifts. Bias can re-emerge. That’s why ISO 42001 integrates continuous monitoring, internal audits, corrective actions, and performance evaluations.
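As an illustration of what continuous monitoring for data drift could involve, the sketch below computes the Population Stability Index (PSI), a widely used drift measure, over pre-binned feature proportions. The choice of PSI, the bin values, and the 0.2 alert threshold are assumptions for this example; ISO 42001 does not mandate a particular drift metric.

```python
import math


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two binned distributions given as lists of proportions.

    expected: bin proportions from the training (baseline) data
    actual:   bin proportions from recent production data
    eps:      floor that avoids log(0) and division by zero for empty bins
    """
    if len(expected) != len(actual):
        raise ValueError("Both distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


baseline = [0.25, 0.25, 0.25, 0.25]  # hypothetical training distribution
recent = [0.10, 0.20, 0.30, 0.40]    # hypothetical production distribution

psi = population_stability_index(baseline, recent)
ALERT_THRESHOLD = 0.2  # common rule of thumb, treated here as an assumption
if psi > ALERT_THRESHOLD:
    print(f"PSI={psi:.3f}: drift detected, trigger a model review")
```

Running a check like this on a schedule turns "data drifts" from a general concern into a measurable trigger for corrective action.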

When organizations ask how ISO 42001 defines responsible AI, continuous improvement is a major part of the answer.

Creating trustworthy AI systems requires more than testing accuracy. It requires lifecycle governance:

From design to deployment, organizations must:

ISO 42001 requires identifying potential discrimination risks and implementing bias detection mechanisms.

This includes:
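As one illustration of a bias detection mechanism, the sketch below measures the gap in selection rates between groups, often called the demographic parity difference. The function names, the sample data, and the choice of this particular fairness metric are assumptions for the example; the standard does not name a specific metric, and the right one depends on context.

```python
def selection_rates(outcomes):
    """Return the share of positive outcomes per group.

    outcomes: iterable of (group, selected) pairs, where selected is a bool
    """
    totals, positives = {}, {}
    for group, selected in outcomes:
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(selected)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(outcomes):
    """Gap between the highest and lowest group selection rates."""
    rates = selection_rates(outcomes)
    return max(rates.values()) - min(rates.values())


# Hypothetical screening decisions for two applicant groups.
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

print(selection_rates(decisions))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(decisions))  # 0.5
```

A large gap does not prove discrimination on its own, but it is a clear signal that the model and its training data need closer review.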

Strong data governance underpins responsible AI governance. Controls may align with information security and privacy frameworks to protect sensitive data.

Secure data handling reduces reputational and legal risks.

Many countries have introduced AI principles and ethical guidelines, but ISO/IEC 42001 stands apart for several key reasons. It is certifiable, which means organizations can formally demonstrate compliance; it follows a structured management system approach similar to other ISO standards; and it integrates governance directly with operational controls across the AI lifecycle.

While many frameworks describe what ethical AI should look like in theory, ISO 42001 explains how to operationalize it within an organization through policies, processes, risk assessments, and accountability mechanisms. So when asking how ISO 42001 defines responsible AI, the answer lies in its structured, auditable governance model that transforms principles into measurable practice.

This standard is ideal for:

If your organization develops, deploys, or procures AI systems, responsible AI governance is no longer optional.

Global AI regulations are emerging rapidly. ISO 42001 prepares organizations for compliance alignment.

Early risk identification prevents costly failures.

Customers prefer transparent and accountable systems.

Certification signals commitment to ethical AI practices.

Trustworthy AI systems drive sustainable innovation.

So, how does ISO 42001 define responsible AI?

It defines it as a governed, risk-managed, transparent, accountable, and continuously monitored AI management system. Rather than relying on abstract ethics, ISO 42001 embeds responsible AI governance into leadership decisions, operational controls, risk assessments, and audit processes, turning principles into measurable practice.

In a world of rising regulatory scrutiny and reputational risk, this structured approach helps organizations build truly trustworthy AI systems that balance innovation with accountability.

Responsible AI isn’t just about intelligence.

It’s about governance, control, and trust at scale.

